Common introduction to all sections

This is part 4 of 10 blog posts I’m writing to convey the information that I present in various workshops and lectures about complexity. I’m an evaluator, so I think in terms of evaluation, but I’m convinced that what I’m saying is equally applicable to planning.

I wrote each post to stand on its own, but I designed the collection to provide a wide-ranging view of how research and theory in the domain of “complexity” can contribute to the ability of evaluators to show stakeholders what their programs are producing, and why. I’m going to try to produce a YouTube video on each section. When (if?) I do, I’ll edit the post to include the YT URL.

| Part | Title | Approximate post date |
| --- | --- | --- |
| 1 | Complex systems or complex behavior? | up |
| 2 | Complexity has awkward implications for program designers and evaluators | up |
| 3 | Ignoring complexity can make sense | up |
| 4 | Complex behavior can be evaluated using comfortable, familiar methodologies | up |
| 5 | A pitch for sparse models | 7/1 |
| 6 | Joint optimization of unrelated outcomes | 7/8 |
| 7 | Why should evaluators care about emergence? | 7/16 |
| 8 | Why might it be useful to think of programs and their outcomes in terms of attractors? | 7/19 |
| 9 | A few very successful programs, or many, connected, somewhat successful programs? | 7/24 |
| 10 | Evaluating for complexity when programs are not designed that way | 7/31 |

This blog post will give away much of what is to come in the other parts, but that’s OK. One reason it’s OK is that it’s never a bad thing to cover the same material twice, each time in a somewhat different way. The other reason is that before getting into the details of complex behavior and its use in evaluation, an important message needs to be internalized: the title of this blog post is in fact correct. Complex behavior can be evaluated using comfortable, familiar methodologies.

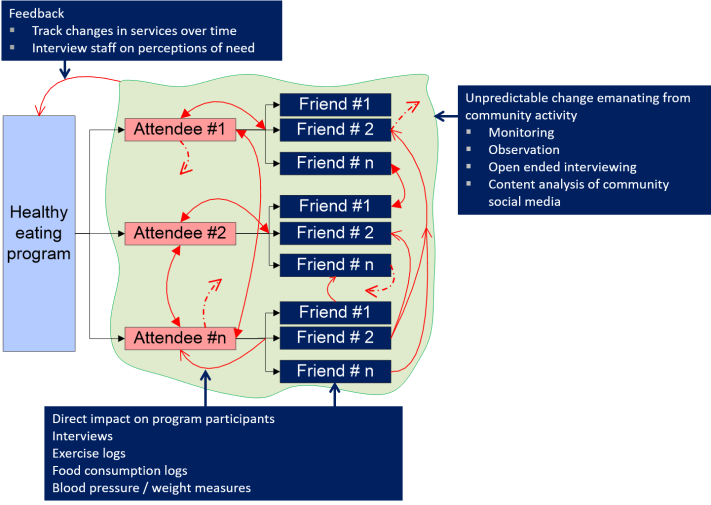

Figure 1 illustrates why this is so. It depicts a healthy eating program whose function is to reach out to individuals and teach them about dieting and exercise. Secondary effects are posited because attendees interact with friends and family. It is thought that because of that contact, four kinds of outcomes may occur.

- Friends and family pick up some of the information that was transmitted to program attendees, and improve their own health-related behavior.

- Collective change occurs within a family or cohort group, resulting in desirable health improvements, even though the specific changes cannot be identified in advance.

- There may be community-level changes. Consider two examples: 1) An aggregate improvement in the health of people in a community may change their energy for engaging in volunteer behavior. The important outcome is not the number of hours each person puts in. The important outcome is what happens in the community because of those hours. 2) Better health may result in people working more hours and, hence, earning more money. Income is an individual-level outcome, but the consequences of increased wealth in the community are a community-level outcome.

- To cap it all off, there is a feedback loop between the accomplishments of the program and what services the program delivers. So over time, the program’s outcomes may change as the program adapts to the changes it has wrought.

Even without a formal definition of complexity, I think we would all agree that this is a complex system. There are networks embedded in networks. There are community-level changes that cannot be understood by “summing” specific changes in friends and family. There are influences among the people receiving direct services. Program theory can identify health changes that may occur, but it is incapable of specifying any of the other changes that may occur. There is a feedback loop whereby the effects of the program influence the services the program delivers. And what methodologies are needed to deal with all this complexity? They are in Table 1. Everything there is a method that most evaluators can either apply themselves or easily recruit colleagues to apply.

Table 1: Familiar Methodologies to Address Complex Behaviors

| Program Behavior | Methodology |
| --- | --- |
| Feedback between services and impact | |
| Community level change | |
| Direct impact on participants | |

There are two exceptions to the “comfortable, familiar methodology” principle. The first is cases where formal network structure matters. For instance, imagine that it were not enough to show that network behavior was at play in the healthy eating example, but that the structure of the network and its various centrality measures were important for understanding the program outcomes. In that case one would need specialized expertise and software. The second is a scenario where the evaluation would benefit from modeling the program in a computer simulation. Those kinds of models are useless for predicting how a program will behave, but they are very useful for getting a sense of the program’s performance envelope and for testing assumptions about relationships between program and outcome. If any of that mattered, one would need specialized expertise in system dynamics or agent-based modeling, depending on one’s view of how the world works and what information one wanted to know.
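To make those two exceptions a little more concrete, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the contact network, the names, the adoption probability, and the helper functions `degree_centrality`, `simulate_spread`, and `performance_envelope` are all invented, and a real evaluation would rely on dedicated network-analysis or agent-based modeling tools. The first function computes one simple centrality measure. The other two run a toy agent-based simulation many times and report the smallest and largest number of people reached, which is one rough way to think about a performance envelope rather than a prediction.

```python
import random

# Hypothetical contact network: each person maps to the people they talk to
# regularly. All names and ties are invented for illustration.
CONTACTS = {
    "attendee_1": ["friend_a", "friend_b", "sibling_c"],
    "attendee_2": ["friend_b", "parent_d"],
    "friend_a": ["attendee_1", "friend_b"],
    "friend_b": ["attendee_1", "attendee_2", "friend_a"],
    "sibling_c": ["attendee_1"],
    "parent_d": ["attendee_2", "neighbor_e"],
    "neighbor_e": ["parent_d"],
}


def degree_centrality(contacts):
    """Share of the other people in the network that each person is tied to."""
    n = len(contacts)
    return {person: len(set(ties)) / (n - 1) for person, ties in contacts.items()}


def simulate_spread(contacts, attendees, adopt_prob, rounds, seed):
    """Run one simulated history and return the set of people who changed behavior.

    Assumption: in each round, every person who has already changed behavior
    influences each of their contacts with probability adopt_prob.
    """
    rng = random.Random(seed)  # seeded so that each run is reproducible
    adopted = set(attendees)
    for _ in range(rounds):
        newly_adopted = set()
        for person in sorted(adopted):  # sorted for a stable iteration order
            for contact in contacts[person]:
                if contact not in adopted and rng.random() < adopt_prob:
                    newly_adopted.add(contact)
        adopted |= newly_adopted
    return adopted


def performance_envelope(contacts, attendees, adopt_prob, rounds, n_runs):
    """Smallest and largest number of people reached across n_runs simulations."""
    sizes = [
        len(simulate_spread(contacts, attendees, adopt_prob, rounds, seed))
        for seed in range(n_runs)
    ]
    return min(sizes), max(sizes)


if __name__ == "__main__":
    print(degree_centrality(CONTACTS))
    print(performance_envelope(CONTACTS, ["attendee_1", "attendee_2"], 0.3, 3, 200))
```

The point of the sketch is the shape of the output, not the numbers: the simulation returns a range of plausible outcomes under stated assumptions, and changing `adopt_prob` or the network ties is a cheap way to test how sensitive that range is to those assumptions.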
