Common Introduction to all 6 Posts

History and Context

These blog posts are an extension of my efforts to convince evaluators to shift their focus from complex systems to specific behaviors of complex systems. We need to make this switch because there is no practical way to apply the notion of a “complex system” to decisions about program models, metrics, or methodology. But we can make practical decisions about models, metrics, and methodology if we attend to the things that complex systems do. My current favorite list of complex system behaviors that evaluators should attend to is:

| Complexity behavior | Posting date |
| --- | --- |
| Emergence | up |
| Power law distributions | Sept. 21 |
| Network effects and fractals | Sept. 28 |
| Unpredictable outcome chains | Oct. 5 |
| Consequence of small changes | Oct. 12 |
| Joint optimization of uncorrelated outcomes | Oct. 19 |

For a history of my activity on this subject see: PowerPoint presentations: 1, 2, and 3; fifteen-minute AEA “Coffee Break” videos 4, 5, and 6; long comprehensive video: 7.

Since I began thinking of complexity and evaluation in this way I have been uncomfortable with the idea of just having a list of seemingly unconnected items. I have also been unhappy because presentations and lectures are not good vehicles for developing lines of reasoning. I wrote these posts to address these dissatisfactions.

From my reading in complexity I have identified four themes that seem relevant for evaluation.

- Pattern

- Predictability

- How change happens

- Adaptive and evolutionary behavior

Others may pick out different themes, but these are the ones that work for me. Boundaries among these themes are not clean, and connections among them abound. But treating them separately works well enough for me, at least for right now.

Figure 1 is a visual depiction of my approach to this subject.

Figure 1: Complex Behaviors and Complexity Themes

- The black rectangles on the left depict a scenario that pairs a well-defined program with a well-defined evaluation, resulting in a clear understanding of program outcomes. I respect evaluation like this. It yields good information, and there are compelling reasons for working this way. (For reasons why I believe this, see 1 and 2.)

- The blue region is there to indicate that no matter how clear-cut the program and the evaluation, both are embedded in a web of entities (programs, policies, culture, regulation, legislation, etc.) that interact with our program in unknown and often unknowable ways.

- The green region depicts what happens over time. The program may be intact, but the contextual web has evolved in unknown and often unknowable ways. Such are the ways of complex systems.

- Recognizing that we have a complex system, however, does not help with developing program theory, formulating methodology, or analyzing and interpreting data. For that, we need to focus on the behaviors of complex systems, as depicted in the red text in the table. Note that the complex behaviors form the rows of a table. The columns show the complexity themes. The Xs in the cells show which themes relate to which complexity behaviors.

Major Change from Minor Variation

| | Pattern | Predictability | How change happens | Adaptive evolutionary behavior |
| --- | --- | --- | --- | --- |
| Emergence | | | | |
| Power law distributions | | | | |
| Network effects and fractals | | | | |
| Unspecifiable outcome chains | | | | |
| Consequence of small changes | X | X | | |
| Joint optimization of uncorrelated outcomes | | | | |

In one of the other posts in this series I made the point that while small changes may affect intermediate outcomes, the pattern of those intermediate outcomes does not affect which long term outcomes are achieved. Here I make the opposite point, i.e. that small, seemingly insignificant events can result in a program developing along a radically different trajectory. Both of these behaviors can be characteristic of complex systems. Under some conditions, continual instability can be contained within a defined set of boundaries. (The terms “basin of attraction” and “attractor” are often used to describe this phenomenon.) Under other conditions, a seemingly insignificant fluctuation can set a system on a radically different trajectory. Or put another way, a small change can lead a program to adapt to its environment by evolving along a previously unrecognized path.

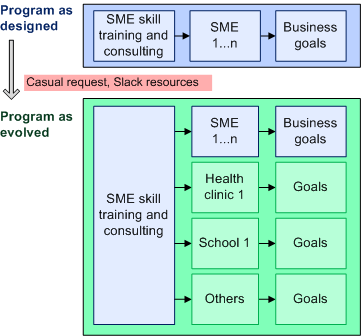

Figure 2: Evolutionary Divergence from Small Change

Does this matter from an evaluation point of view? Maybe not, if the origins of change don’t matter. It may make no difference why a program initially diverged from its original plan, or precisely when. In many (maybe even most) cases, all that matters is what the program looks like once substantial change has occurred, and any new outcomes that may have manifested themselves.

There may, however, be cases where the origins of change do matter because there are implications for program theory and for evaluation methodology. I constructed the example in Figure 2 to illustrate these points. The top of Figure 2 (in blue) depicts a program designed to assist small and medium-sized enterprises (SMEs) by providing training and consulting in topics such as strategic business planning, personnel management, marketing, inventory and supply chain management, customer relationships, quality control, financial systems, and other related topics. The logic is simple and straightforward. Training and consulting result in SMEs that are better able to meet their business goals.

The bottom of Figure 2 (in green) depicts the long-term consequence of a casual request by someone in a local health clinic who knows about the SME program, thinks that some of the skills would be useful in the clinic, and asks a staff member for a little help. Of course, in most cases a little bit of help would be given on a one-time basis, the health clinic would benefit a bit, and that would be the end of it.

But there is another possibility. It’s not inconceivable that the experience with the health clinic would lead the program to deliberately exploit similar opportunities. For that to happen, a lot would have to line up. For instance: 1) Other organizations would have to know that the opportunity existed. 2) Staff would have to have more than “a little bit of slack time”. 3) Funders would have to approve of what is, in essence, a change in the original mission of the program. 4) Enough of the training provided by the program would have to have wide appeal. 5) Program staff, and its management, would have to embrace the expansion. Of course, this is not the only sequence of events that might lead to program evolution stemming from that initial small change. With a little effort it would be easy to imagine other paths. For instance, initial success may lead community organizations to lobby program funders for an expansion of the services provided by the SME support program, and those funders may be in a position to see the expansion as being in their interest.

From a complexity point of view, this story carries three messages. First, in cases like this a lot has to line up for an initial change to result in a major program evolution. Most of the time a small event will not lead to a system diverging along a novel path. The effects of most small changes usually dampen. The butterfly flapping its wings almost never causes a hurricane. Second, there are multiple sequences of events that can lead to the same evolutionary path.

Finally, the scenario in Figure 2 is different from the classic view of “initial conditions”. There, the focus is on a small change in a deterministic nonlinear system, where that change alone is enough to drive major changes in the system over time. (This is where the “butterfly effect” came from. Edward Lorenz came up with it as a result of his work on how small rounding errors in weather models could result in major changes in the output of the models.)
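To make the “initial conditions” idea concrete, here is a minimal sketch of sensitive dependence in a deterministic nonlinear system. It is not Lorenz's weather model. I am substituting the logistic map, a standard textbook stand-in, and the function names, the starting value of 0.2, and the nudge of 1e-10 are all my own illustrative assumptions.

```python
def logistic_trajectory(x0, r=4.0, steps=60):
    """Iterate x -> r * x * (1 - x) and return every value visited.

    The logistic map with r = 4 is an assumed stand-in for a
    deterministic nonlinear system; it is not a weather model.
    """
    values = [x0]
    for _ in range(steps):
        values.append(r * values[-1] * (1.0 - values[-1]))
    return values


def divergence(x0, nudge=1e-10, steps=60):
    """Return the step-by-step gap between two runs whose starting
    points differ only by a tiny nudge (a stand-in for a rounding error)."""
    baseline = logistic_trajectory(x0, steps=steps)
    nudged = logistic_trajectory(x0 + nudge, steps=steps)
    return [abs(a - b) for a, b in zip(baseline, nudged)]


if __name__ == "__main__":
    gaps = divergence(0.2)  # 0.2 is an arbitrary illustrative starting point
    for step in (0, 10, 20, 30, 40, 50, 60):
        print(f"step {step:2d}: gap = {gaps[step]:.3e}")
```

Running it shows the gap starting out negligible and then growing until the two runs bear no resemblance to each other. Nothing else has to line up for that to happen, which is exactly how this classic case differs from the scenario in Figure 2.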

In Figure 2, a series of events has to take place in a particular sequence. Each event may be low probability, and the joint occurrence of those events makes for an even lower probability. One might be reasonably confident that some particular sequence of events might occur to produce the evolutionary path shown in the bottom of Figure 2, but: 1) which particular sequence cannot be foreseen, 2) other evolutionary paths are no less likely than the one observed, and 3) most events will have no consequence whatsoever.
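The arithmetic behind that paragraph can be sketched in a few lines. This is only an illustration under strong assumptions: the events are treated as independent, every candidate sequence is given the same probability, and the specific numbers (five events at 0.3 each, 200 candidate sequences) are invented for the example rather than taken from any real program.

```python
import math


def joint_probability(event_probs):
    """Probability that every event in one sequence occurs,
    assuming (hypothetically) that the events are independent."""
    return math.prod(event_probs)


def prob_at_least_one(p_sequence, n_sequences):
    """Probability that at least one of n equally likely, independent
    sequences occurs. Both conditions are simplifying assumptions."""
    return 1.0 - (1.0 - p_sequence) ** n_sequences


if __name__ == "__main__":
    # Five events, each given an invented probability of 0.3.
    one_path = joint_probability([0.3] * 5)  # 0.3 ** 5, about 0.00243
    # 200 is an invented count of distinct sequences that could lead
    # to the same kind of program evolution.
    some_path = prob_at_least_one(one_path, 200)
    print(f"one particular sequence: {one_path:.5f}")
    print(f"at least one sequence:   {some_path:.3f}")
```

Any single sequence is very unlikely, yet the chance that some sequence occurs can be respectable when many sequences are possible. That is why I can be reasonably confident that evolution of some kind will happen without being able to say which path it will take.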

Are there evaluation implications to the phenomenon I just described? Probably not. After all, once a change in a program has been recognized, it can be evaluated. Also, who cares what that initial small change was? At the time it occurred it was inconsequential. Still, it’s safest to take the position that there are implications for doing evaluation because there are consequences for program theory and for methodology.

Program theory: First, a change of the kind I described may indicate that the initial program theory was incorrect. That’s worth knowing for future planning. In this case there are aspects of SME support that are more broadly applicable than originally assumed. Second, something is learned by knowing the evolutionary path. It is true that multiple events could have aligned to support the same evolutionary path. But knowing a path that worked could provide a lot of valuable understanding and insight.

Methodology: One implication for methodology deals with the lead time between detecting a need to evaluate and implementing the evaluation activity. Evaluating the evolved program depicted in my scenario may require some difficult changes in the evaluation design. In these situations, it is important to have as much time as possible between detecting the need for a change in the evaluation design and implementing those changes. Imagine that the bottom part of Figure 2 could be evaluated by using a semi-structured interview with people as they received support, and then again shortly thereafter. That’s easy and cheap. But suppose the evaluation had to: 1) draw from baseline interviews prior to support being provided, 2) use an industry-specific validated survey, and 3) draw comparisons with other organizations that did not receive program services. None of these evaluation elements could be implemented easily. All would require considerable advance planning, quite a bit of effort, and budget. The sooner the need for such changes in the evaluation is realized, the better. In the spirit of shameless self-promotion, allow me to point out that my book on unintended consequences deals with these subjects in depth.

A second implication for evaluation is the realization that while particular evolutionary paths may be unpredictable, it is a safe bet that program managers and planners will have opportunities to make decisions that will take their program along some kind of evolutionary path. Given that high likelihood, it would seem prudent to always include an evaluation component that is sensitive to decision-making and policy-setting practices.

Another Perspective on Small Change in Complexity

In this post I downplayed the consequences of small change because I wanted to make a particular point about the evaluation implications of how programs may sometimes evolve. It’s important to keep in mind though, that nonlinear change is a fundamental characteristic of complex systems. Small change can result in an outsized effect, and a seemingly large change may have no effect at all. For an example of how this phenomenon can have consequences for evaluation, see my discussion of network change in: Drawing on Complexity to do Hands-on Evaluation (Part 3) – Turning the Wrench. For a good overview of key concepts in complexity, go to: A simple guide to chaos and complexity.

Thanks to Mat Walton for pointing out the error of my ways on previous drafts of this post.