Recently I was asked to prepare a brief presentation for people in the prediction business – planners and evaluators whose work was preoccupied with some form of the question: If I do this, what will happen? The audience brought a traditional if > then logic to the way they answered this question. They knew that many forms of this relationship were needed – 1:1, 1:many, many:1, and many:many. They knew that feedback loops were present. They knew that environmental influences should be considered. But whatever the configuration, the logic involved an if > then belief in causation.

Complexity Science, however, reveals other ways to look at relationships. The objective of the presentation was to sensitize the audience to those other possibilities and to suggest that they may sometimes want to think differently. If > then logic still prevails, but a complexity perspective provides a different conceptualization of what “if” means and what “then” means.

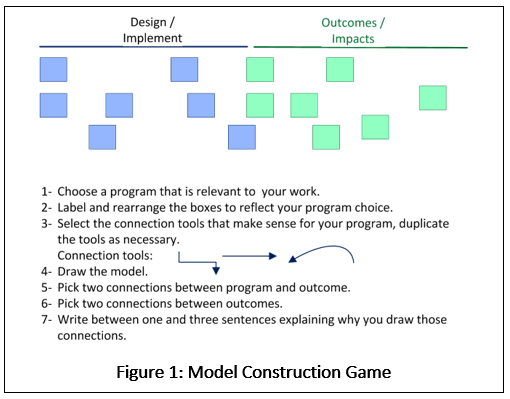

To succeed I had to identify a small number of concepts that people could apply to think differently and would pique people’s interest in learning more. I settled on two: 1) emergence, and 2) sensitive dependence. To explain the implications of these two constructs, it helps to begin with a game (Figure 1).

Whatever the specifics of your model, it would convey a three-stage narrative:

1- Implement these actions.

2- Make sure you implement them in this specific order.

3- If you do steps one and two, these outcomes will occur.

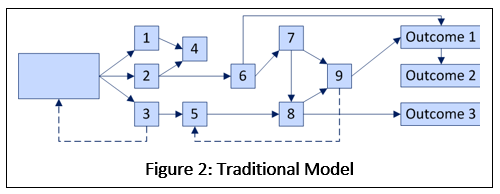

The details of each model will be different, but qualitatively each model would look something like Figure 2.

Invoking emergence and sensitive dependence, however, would yield a model along the lines of Figure 3. The narrative would be:

1- Implement a lot of these actions, and

2- these outcomes will happen.

What makes emergence and sensitive dependence so critical to thinking about implementation and outcome? Aren’t other aspects of Complexity Science relevant? They are. But emergence and sensitive dependence are the fundamental reasons why reasoning about program outcomes should often turn away from the logic of Figure 2 and embrace the logic of Figure 3. After discussing emergence and sensitive dependence, I will turn to some other constructs that help us apply them in actionable ways, yielding guidance for the design and evaluation of activity that is intended to make real differences in the real world.

Emergence

Emergence is “A process by which a system of interacting subunits acquires qualitatively new properties that cannot be understood as the simple addition of their individual contributions.” (Definition courtesy of the glossary at Complexity Explorer.)

Let’s consider emergence by comparing two scenarios. The first is a cylinder operating in the engine of a car (Figure 4). People know what a cylinder is: its materials, its design, and its function. They also know how it contributes to the operation of the car. We all agree that a car is greater than the sum of its parts. But with a car, the parts can be identified and, moreover, the function of those parts is always known. Parts do not lose their identity.

Now imagine the draw of a large urban area, a concept that for lack of a better word I’ll call “urban vitality”. (See Figure 5 for an evocation of what warms the heart of this New Yorker.) What is “urban vitality”? The answer is a combination of a host of factors, e.g., walkability, ethnic diversity, transportation, business opportunities, variety of cultural opportunity, and probably, a lot more. Moreover, while these variables are correlated, the experience of “urban vitality” may result from different combinations and degrees of these factors. Whatever “urban vitality” is, it is some kind of holistic construct that cannot be understood by inspecting its parts. This is a case of “emergence”.

From the point of view of planning and evaluation the difference is profound. In the case of the car, one can identify a part (the cylinder), observe its operation, judge how well it is operating, and make a judgement about the effect of the operation of the cylinder on the operation of the car. Were I to propose a design or materials change that affected the power output of the cylinder, I would be able to draw a causal connection between the change in the cylinder and the change in the car.

In the urban case it would be a good idea to measure each of the constituent parts, but one could not make a judgment as to how each of those components affected urban vitality. For that, one would need a global measure that would be different from any metric that described any of the component parts. As an example, one might consider “real income”, which captures buying power.[1] The same amount of money will buy a lot more housing in Detroit than it will in New York City. So why are so many people so willing to pay New York prices? Or put differently, what is it about New York that pulls so many people to it? Were I to implement a better subway scheduling system I could measure the effect on subway travel. But I could not draw a causal connection between the improved scheduling and the amount of urban vitality.

Note how the urban vitality example is phrased in terms of causation and not in terms of methodology. If I had the right data, at least in theory I could calculate a correlation between subway performance and real income. But even if I did, I would have no theory of action that would allow me to use that measure of association to inform further action. The best I could say would be: “making the subway schedules better is a good thing to do if I want to improve urban vitality.” This brings us to sensitive dependence.

Sensitive Dependence

The glossary definition of sensitive dependence reads: “A system’s sensitivity to initial conditions refers to the role that the starting configuration of that system plays in determining the subsequent states of that system. When this sensitivity is high, slight changes to starting conditions will lead to significantly different conditions in the future. Sensitive dependence on initial conditions is a defining property of chaos in dynamical systems theory.” (Definition courtesy of the glossary at Complexity Explorer.)

Figure 2 is built on the assumption that it is possible to draw an unambiguous path from the beginning of the model to the end. One might argue whether the connections are correct. I might claim that “A” causes “B” and you might argue that “A” does not cause “B”, but that it does cause “C”. But common to our points of view is that it is possible to draw such a connection.

Sensitive dependence suggests that we might both be wrong. It may be impossible to specify such connections, as depicted in Figure 3. The problem is that small fluctuations in the behavior of any single element can affect the trajectory through the model. Local variation matters, and local variation is unavoidable. This does not mean that all programs are doomed because even the slightest deviation will lead to failure. Many paths may be successful. It is also entirely possible that a system is robust in the face of small deviations. Degrees of sensitivity and number of successful paths are empirical questions. (In fact, most systems are stable. That’s why it is so hard to bring about change or for evaluation to be used. It makes sense that Nature would have evolved our world into stability. Otherwise social organization could not exist.)
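A small numerical sketch may make the contrast between sensitive and robust systems more concrete. It uses the logistic map, a standard textbook example from dynamical systems theory; it is not drawn from the presentation itself. The function names, the starting value `0.2`, the perturbation `1e-9`, and the two parameter settings (`r=2.5` for a stable regime, `r=4.0` for a chaotic one) are all illustrative assumptions.

```python
def logistic_trajectory(x0, r, steps):
    """Iterate x -> r * x * (1 - x) and return the list of visited states."""
    xs = [x0]
    for _ in range(steps):
        xs.append(r * xs[-1] * (1 - xs[-1]))
    return xs


def max_gap(r, x0=0.2, delta=1e-9, steps=100):
    """Largest distance between two runs whose starting points differ by delta."""
    a = logistic_trajectory(x0, r, steps)
    b = logistic_trajectory(x0 + delta, r, steps)
    return max(abs(p - q) for p, q in zip(a, b))


if __name__ == "__main__":
    # Assumed parameter values: r=2.5 settles to a fixed point, r=4.0 is chaotic.
    print("stable regime  (r=2.5):", max_gap(2.5))
    print("chaotic regime (r=4.0):", max_gap(4.0))
```

With the same tiny difference in starting conditions, the two runs stay essentially identical in the stable regime and drift far apart in the chaotic one. Which regime a real program resembles is, as noted above, an empirical question.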

Planning and Evaluating When Emergence and Sensitive Dependence are Operating

What are planners and evaluators to do if they cannot predict a path and when the outcome being measured is something other than “the sum of its parts”? How can they lay plans and how can they use evaluation to determine the effectiveness of those plans?

What they can do is to use experience and research to identify important elements, and to implement as many of them as possible. The program theory would be: “Our desired emergent behavior will manifest if we implement as many relevant elements as possible. We cannot measure outcome success as a function of its constituent parts, but we can measure it as a construct in its own right.”

That is an uncomfortable theory because it provides no guidance as to the order in which implementations should take place, or how many of them are needed. To take the previous example, it may not be possible to identify causal relationships between subway scheduling and urban vitality, but it is eminently reasonable to believe that more frequent, on-time subway service plays a role in the emergent phenomenon of urban vitality. Planning like this leads us to the model in Figure 3. Figures 2 and 3 present two competing approaches to identifying what causal knowledge can be had about the programs we implement. These competing approaches are summarized in Table 1.

Table 1: Comparison of Traditional and Complexity Based Models

| Traditional model | Complexity based model |
| --- | --- |
| Implement these actions. | Implement a lot of these actions. |
| In this order. | (No order specified.) |
| These outcomes will manifest. | These outcomes will manifest. |

Complexity Constructs that can Decrease Ambiguity

“Emergence” and “sensitive dependence” are important because they orient thinking away from the belief that Nature always presents unambiguous enduring causal models that lead from program to outcome. If planners and evaluators know nothing more about complexity, these concepts suffice to turn their attention to a different planning logic. However, it is important to appreciate that there are constructs that have connections with Complexity Science that do provide actionable guidance about what should be done, when, and how evaluation can inform further action.

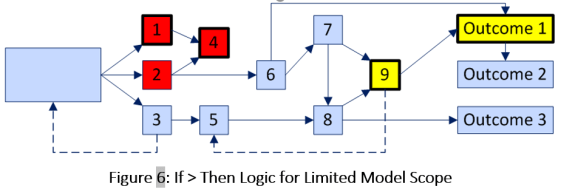

Model Scope: The reason for not trusting the model in Figure 2 is its long outcome chains. Because local variation can change trajectories, and local variation can take place all along the model, there is every reason to believe that a predicted path will not come to pass. But now consider Figure 2 recast as Figure 6.

The only difference between Figure 2 and Figure 6 is that colors and border weights have been used to illustrate how proximity may affect uncertainty. There can be little certainty about the path between “action 1” and “Outcome 1” because all the intermediate elements are subject to so many local fluctuations, each of which can change the path’s trajectory. But now consider the colors. There is minimal distance between actions 1 and 4, and between action 9 and Outcome 1. Thus, it would be defensible to reason about those proximate relationships in terms of traditional if > then logic. Why? Because there are fewer links in the logic chain to present opportunities for local variation that can affect long-term trajectories.
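The intuition that shorter chains are more trustworthy can be sketched with a deliberately simplified calculation. It assumes, purely for illustration, that each link in a causal chain holds independently with the same probability; neither the independence assumption nor the value `0.9` comes from the figures, and `chain_reliability` is a hypothetical helper name.

```python
def chain_reliability(p_link, n_links):
    """Probability that all n_links hold, assuming independent, equally reliable links."""
    return p_link ** n_links


if __name__ == "__main__":
    # Assumed per-link reliability of 0.9; real links are neither equal nor independent.
    for n in (1, 2, 5, 10):
        print(f"{n:2d} links -> {chain_reliability(0.9, n):.3f}")
```

Under these assumptions a two-link chain holds about 81% of the time, while a ten-link chain holds only about 35% of the time, which is one way to see why if > then reasoning is more defensible for proximate relationships than for long paths.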

Priorities for implementation and evaluation: It is true that long term causal relationships cannot be specified in advance. That truth does not imply, however, that the advice to “implement a lot of these actions” (Table 1) means that priorities should be picked at random. There is always a body of research and evaluation to draw from. There is always wisdom and experience to guide action. Emergence and sensitive dependence speak to how we should reason about the consequences of our actions, not about our priorities for action.

The elements in all the figures are generic, but actual program design identifies them. Drawing on the urban example, we know that public transportation is important, and consequently, that efforts to increase urban vitality should include improvements in transportation. Another component of urban vitality is public safety, thus dictating the need to do something about that as well. So the dictum “do a lot of these actions” should refer to activity within domains. Action needs to be taken to improve transportation. Action also needs to be taken to improve public safety. The lesson of complexity is that we may not be able to specify what specific action must be taken within those domains, only that action must be taken.

Lessons of History: One cannot predict what will happen. It is possible, however, to discover what has happened. That knowledge is particularly valuable because of its proximity to the planning and evaluation work that is under consideration. It is a high priority for inclusion in the larger body of research and evaluation that needs to be part of priority setting.

Summary

The lesson of Complexity Science for planners and evaluators is that our common, familiar, comfortable mode of reasoning about causal chains from programs to outcomes may not comport with the complex realities of how such programs work. If emergent behavior characterizes outcomes, then the role of the components of those outcomes cannot be uniquely determined. If sensitive dependence is operating, a single, predictable, replicable path from program implementation to outcome cannot be specified. This is not to say that change is random, or that planning should not be deliberate, or that evaluation cannot be informative. It is to say that there is a more realistic way to reason about how programs are implemented, how they change, and how success should be measured.

[1] I got this idea from Edward Glaeser’s book, Triumph of the City: How Our Greatest Invention Makes Us Richer, Smarter, Greener, Healthier, and Happier.